Are you interested in LED Wall or Green Screen Virtual Production?

Then you’ll sooner or later come across camera tracking. In this blog post I will try to explain the concept and show you the different options you have for tracking your camera.

My name is Richard and I’ve been a video and live broadcast producer for the past 15 years. For the past three years I’ve been deep into Virtual Production, running my own Virtual Production studio in Sweden called Virtual Star Studios.

One of the biggest obstacles to overcome is getting a reliable camera tracking system working. This is not hard if you have a big budget, but what if you are an indie producer like me? What are your options?

The whole principle of LED and Green Screen is to sell an illusion, and the best way to do that is to immerse the viewer in another world or location. To really sell the effect, the movement of the camera is key. And the way to make the 3D background move in sync with the real camera movement is to track the camera.

Camera tracking is a method of matching the virtual camera to the real camera.

Traditionally this has been done in post, using software like After Effects, PFTrack and others to calculate the camera movement after the shoot.

But to be able to do this in real time you need a real-time camera tracking system.

In order to get the virtual camera to behave exactly like the real camera you need four data sets.

First, capture the position and rotation data as accurately as possible.

Second, transfer the exact focus, iris and zoom values to the computer, often via lens encoders or a cable directly from the lens.

Third, all lenses have their own unique characteristics, such as distortion, which you need to map and apply to the virtual camera.

Fourth, the virtual camera and the real camera need to be synchronized. This is often done with an external sync generator that ties all the equipment in a studio together.
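
As a rough illustration, the four data sets could travel together as one record per frame. The sketch below is hypothetical: the `TrackingSample` name and its fields are made up for this post and do not match any particular vendor’s protocol. It also shows one lens characteristic you can compute yourself, the horizontal field of view from focal length and sensor width, using the standard pinhole formula (real lens calibration also has to deal with distortion, which this ignores).

```python
import math
from dataclasses import dataclass
from typing import Tuple


@dataclass
class TrackingSample:
    """One frame of tracking data (hypothetical layout, for illustration only)."""
    timecode: str                             # shared studio timecode, used for sync
    position_m: Tuple[float, float, float]    # camera position (x, y, z) in metres
    rotation_deg: Tuple[float, float, float]  # pan, tilt, roll in degrees
    focus_m: float                            # focus distance from the lens encoder
    iris_fstop: float                         # aperture
    focal_length_mm: float                    # zoom, expressed as focal length


def horizontal_fov_deg(focal_length_mm: float, sensor_width_mm: float) -> float:
    """Pinhole-model horizontal field of view; lens distortion is ignored."""
    return math.degrees(2.0 * math.atan(sensor_width_mm / (2.0 * focal_length_mm)))


# Example values are invented; 36 mm is the width of a full-frame sensor.
sample = TrackingSample("10:00:00:01", (0.0, 1.5, -3.0), (12.0, -2.0, 0.0), 2.4, 2.8, 35.0)
print(round(horizontal_fov_deg(sample.focal_length_mm, sensor_width_mm=36.0), 1))
```

The virtual camera in the render engine would then be given the same position, rotation and field of view for every frame.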

There are different types of camera tracking available. The two most common are Inside Out and Outside In.

Inside Out

This method uses an onboard camera or device that looks outward at markers, either reflective IR markers or organic markers (natural features) in the room or area.

Outside In

This is pretty much the opposite: you have several cameras looking in at the tracking area and following markers that you put on the real camera.

The market is really growing and there are starting to be many good options out there. Here are a few that I know of. Let me know in the comments if you want me to add more.

The most famous one and, as far as I know, one of the oldest in the game.

Mo-Sys has a wide range of tracking solutions, but the most used is the “StarTracker”. It’s an Inside Out system: you put up small IR-reflective stickers on the ceiling, and a small camera mounted on the main camera shines infrared light upward and constantly tracks the “Stars”. They offer a complete system, with a computer that handles genlock, lens encoders, a sturdy lens calibration system and a good support team. Many of the big TV stations and film productions use this.

Website: https://www.mo-sys.com/

Stype is another famous one that is well used in the industry. It builds on the same concept: you put up infrared-reflective stickers on the ceiling, and a camera with an infrared light looks at the “Stars” to calculate position and rotation. They offer a complete package as well.

Website: https://stype.tv/

Ncam is the first system in the list that doesn’t use “stars” to calculate its position and rotation. Instead it resembles the post-production trackers, looking at organic markers in the room or nearby area. It uses two cameras for a stereoscopic calculation of its position, pretty much the same way you and I perceive depth. This makes it useful in a wide range of situations, for example outdoors.
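
To give a feel for the stereoscopic idea, here is a minimal sketch of the textbook relation between depth and disparity for two parallel cameras. This is not Ncam’s actual algorithm, which I have no insight into; the function name and the numbers are assumptions chosen for illustration.

```python
def depth_from_disparity(focal_length_px: float, baseline_m: float, disparity_px: float) -> float:
    """Distance to a feature seen by two parallel cameras: Z = f * B / d.

    focal_length_px: focal length expressed in pixels
    baseline_m:      distance between the two camera centres in metres
    disparity_px:    horizontal shift of the feature between the two images
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive (feature must be seen in both views)")
    return focal_length_px * baseline_m / disparity_px


# Invented example: 700 px focal length, cameras 10 cm apart.
near = depth_from_disparity(700.0, 0.10, 35.0)  # large shift between views = close feature
far = depth_from_disparity(700.0, 0.10, 7.0)    # small shift between views = distant feature
print(near, far)
```

The closer a feature is, the more it shifts between the two views, which is the same cue your two eyes give you.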

Website: https://www.ncam-tech.com/

OptiTrack is an Outside In system that’s also very well used in the industry. It’s based on having multiple cameras looking into the tracking space and putting markers on the camera. It is mostly used for motion capture but works very well for camera tracking too.

Website: https://optitrack.com/

Similar to OptiTrack, Qualisys is an Outside In system. Their technology comes from medical environments, which need high precision, so it fits very well in our industry too. They’re also from Sweden, like me. Go team!

Website: www.qualisys.com

Well, I guess you can tell that all these systems are very good at doing their job. But they’re also expensive. So what do you do if you’re an indie producer or just getting started?

Well, we look at options that aren’t as expensive but still do the job well.

The Vive is what I started out experimenting with, and this little fella is the whole reason I’ve been able to evolve and build my virtual production company. It’s a VR product and isn’t meant to be used this way, but in the right circumstances it can produce really good results. It’s not reliable enough for a real production environment, though.

Antilatency is the tracking system we use in our studio and we are more than happy with it, especially after having used the Vive. Antilatency has a few different options: a ceiling-mounted version, floor mats, and a solution for mounting on a truss.

We have the ceiling version. The system relies on knowing the exact position of the IR markers, so they need to be placed in a precise pattern. On the camera we mount a small wide-angle camera that reads the position of the IR markers. That small camera also has an IMU, which detects rotation and acceleration, very similar to what you have in a regular iPhone. All together this is a great package for precise camera tracking. Of course you only get position and rotation data from it, so you need to add some more tools to be able to use it in a live studio environment.
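
One common way to combine a fast IMU with slower but drift-free optical measurements is a complementary filter. The sketch below shows that generic technique on a single rotation axis. It is an assumption for illustration only: I don’t know how Antilatency actually fuses its sensors, and the function name, the `alpha` value and the sample numbers are made up.

```python
def fuse_angle(previous_deg: float, gyro_rate_deg_s: float, dt_s: float,
               optical_deg: float, alpha: float = 0.98) -> float:
    """Generic complementary filter for one rotation axis.

    The gyro is integrated for smooth, low-latency motion, and the result is
    nudged toward the optical marker measurement so that drift cannot build up.
    Angle wrap-around (359 -> 0 degrees) is deliberately not handled here.
    """
    predicted = previous_deg + gyro_rate_deg_s * dt_s
    return alpha * predicted + (1.0 - alpha) * optical_deg


# Invented readings: (gyro rate in deg/s, optical angle in deg), one pair every 10 ms.
pan = 10.0
for gyro_rate, optical in [(5.0, 10.2), (5.0, 10.2), (4.0, 10.3)]:
    pan = fuse_angle(pan, gyro_rate, 0.01, optical)
print(pan)
```

A higher `alpha` trusts the IMU more (smoother but more drift), a lower one trusts the markers more (no drift but more jitter).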

Website: www.antilatency.com

ReTracker is a product made by Marwan Rassi, based around the very affordable Intel RealSense T265 stereo tracking camera. Marwan has built a full set of software around this camera to be able to calibrate and track a camera in real time. I have tried this system and it works very well.

Website: https://retracker.co/

Similar to ReTracker, Vanishing Point uses Intel cameras as the base for their camera tracking system. But they’ve also built very mature software and hardware around it to make it a viable solution in a broadcast setting.

Website: https://vanishingpoint.xyz/

As I mentioned earlier, Antilatency only gives you position and rotation data, so for a painless experience you should look into some additional hardware and software.

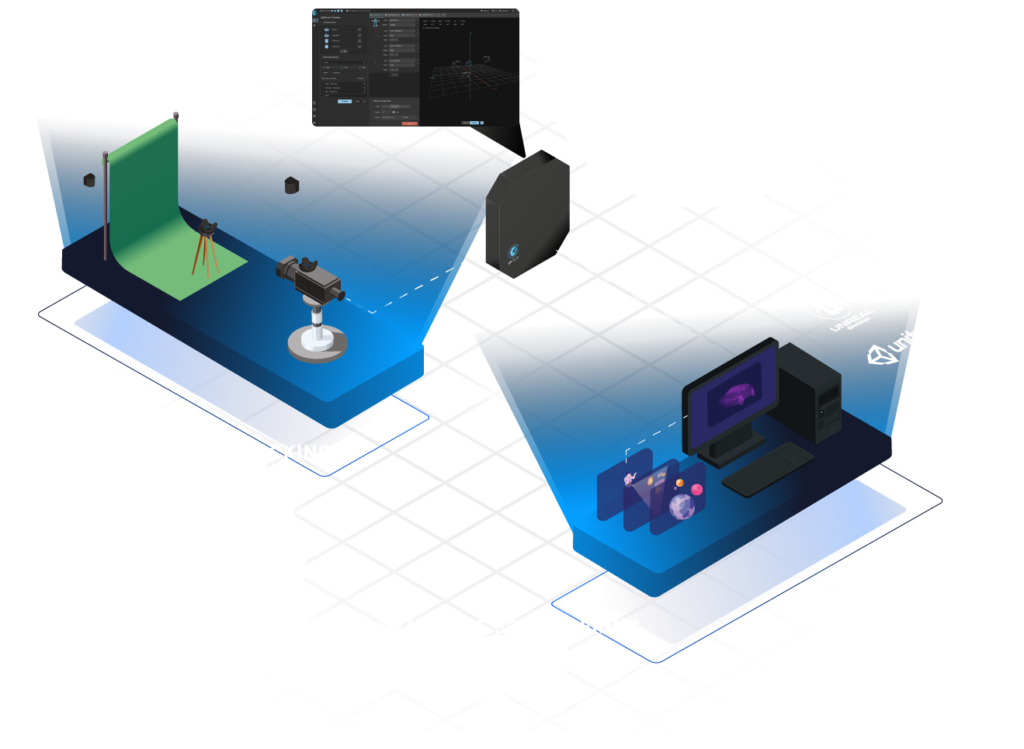

Camix is like a little computer that you put on your camera, and you route all your video and tracking signals into it. Then you use the Camix software to calibrate and sync everything up before sending it to Unreal or Aximmetry. Camix also comes with its own set of lens encoders.
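
To show what “syncing everything up” can involve, here is a small sketch of one generic approach: tracking samples and video frames arrive at different moments, so you estimate where the camera was at the exact time of the video frame by interpolating between the two nearest tracking samples. This is my own illustration and not how Camix is documented to work; the function name and the data layout are hypothetical.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]
TimedPosition = Tuple[float, Vec3]  # (timestamp in seconds, position in metres)


def interpolate_position(before: TimedPosition, after: TimedPosition, frame_time_s: float) -> Vec3:
    """Linearly interpolate the camera position at the time of a video frame."""
    (t_a, pos_a), (t_b, pos_b) = before, after
    if t_a == t_b or not (t_a <= frame_time_s <= t_b):
        raise ValueError("frame time must lie between two distinct tracking samples")
    w = (frame_time_s - t_a) / (t_b - t_a)  # 0.0 at the first sample, 1.0 at the second
    x, y, z = (a + w * (b - a) for a, b in zip(pos_a, pos_b))
    return (x, y, z)


# Invented samples 20 ms apart; the video frame was captured halfway between them.
position = interpolate_position((0.00, (0.0, 0.0, 0.0)), (0.02, (2.0, 1.0, 0.0)), 0.01)
print(position)
```

Rotation needs more care than this (angles wrap around), which is one reason a dedicated system for the job saves a lot of headaches.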

Website: https://camix.tech/

Eztrack is, like Camix, a full system that calibrates, syncs and uses lens encoders to clean up the data before sending it over to Unreal or Aximmetry.

Website: https://eztrack.studio/

Glassmark is one of the first indie lens encoders I know of and is used to get the zoom and focus data into Unreal or Aximmetry. It works really well and is something I’ve been using for the past year.

Website: https://www.loledvirtual.com/indiemark/

So this has been a little walkthrough of the main contenders in the camera tracking arena. I’m quite happy that there are starting to be a lot of options for us indie producers, like Antilatency. Of course you need to complement it with additional hardware, but at a price point that’s less than 1/10 of what the big players charge it could be a worthwhile investment.

And if you are a big production house and can afford Mo-Sys or Stype, that would certainly be a huge time-saver and a robust solution.

What systems did I miss? And what systems do you prefer?

Let me know in the comments.